Modernizing Risk Management

Self-Empowered Public

By: Ralph Nikischer

I don’t go through a day without hearing something about artificial intelligence (AI) and how it will disrupt everything. Recently, ChatGPT has been at the center of the AI conversation. In this Perspective, I put this technology to the test to see whether it can support local government.

Quick Summary

I scored the results of my tests on a scale from not useful to very useful. Here is a quick summary, with detailed results below.

- Creating bid specifications was somewhat useful.

- Answering resident questions was minimally useful.

- Creating codes/ordinances was somewhat useful.

- Collective bargaining interpretation was minimally useful.

The bottom line: ChatGPT can help local government draft text. Whether that is bid specifications, an ordinance, or a code, the technology is helpful for getting started. Without question, the text it creates needs to be edited and tailored to the specifics of the community or project.

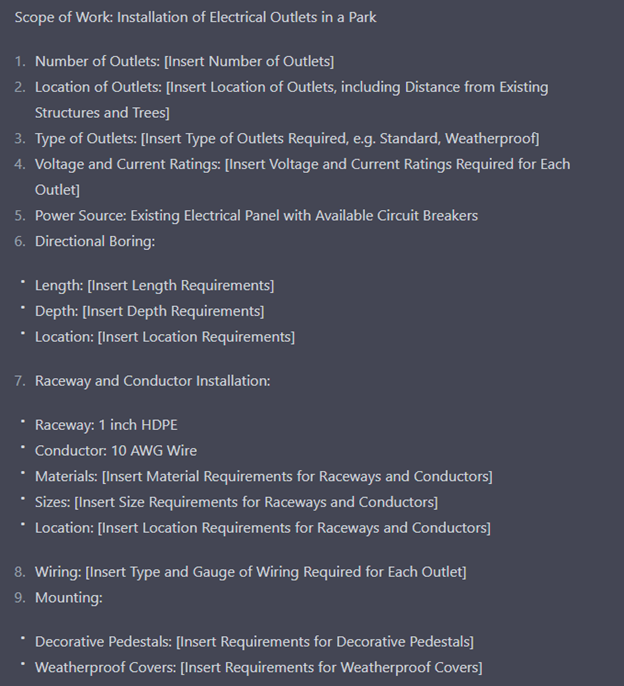

Test Case 1: Creating bid specifications

Somewhat useful

Context

I provided ChatGPT a summary of what I was looking to accomplish, using a real project I completed a few years ago. The scope of the project was to run outlets to the base of every tree in a park to light them for the holidays. I asked ChatGPT to create bid specifications for the project. Although it didn’t have every detail of the specific project, it outlined a reasonable set of items to include in the bid.

Result

Test Case 2: Answering resident questions

Minimally useful

Context

I provided ChatGPT an address and asked what day garbage was collected. It was unable to return the specific day but was able to tell me where I could find this information. Structured data and tools like Community-Portal provide a much better experience.

Result

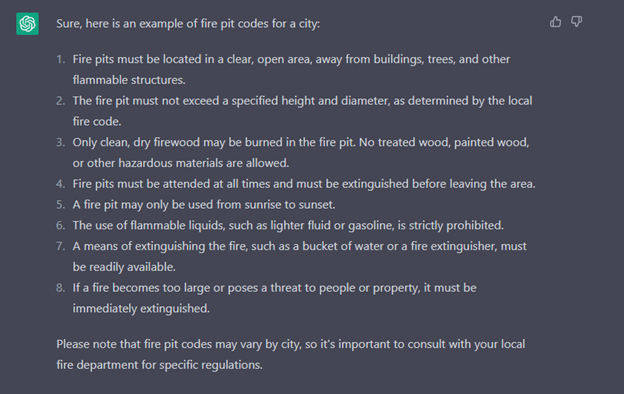
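To illustrate why structured data answers this kind of question more reliably than a general chatbot, here is a minimal sketch of an address-to-collection-day lookup. Everything in it is an assumption made for illustration: the addresses, the schedule, and the function names are hypothetical, and it does not represent how Community-Portal or any particular product works.

```python
from typing import Optional

# Hypothetical schedule data. A real municipality would load this
# from its own records rather than hard-coding it.
COLLECTION_SCHEDULE = {
    "100 main st": "Monday",
    "250 oak ave": "Thursday",
}


def normalize_address(address: str) -> str:
    """Lowercase, drop periods, and collapse whitespace so that
    minor differences in how a resident types an address still match."""
    return " ".join(address.lower().replace(".", "").split())


def collection_day(address: str) -> Optional[str]:
    """Return the collection day for an address, or None if it is unknown."""
    return COLLECTION_SCHEDULE.get(normalize_address(address))


if __name__ == "__main__":
    print(collection_day("100 Main St."))
    print(collection_day("1 Nowhere Rd"))
```

Because the answer comes from the community's own records, a known address gets one specific day and an unknown address gets an explicit "not found" instead of a general pointer to where the information might live.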

Test Case 3: Code/Ordinance text

Somewhat useful

Context

I simply asked ChatGPT to draft a city code for fire pits.

Result

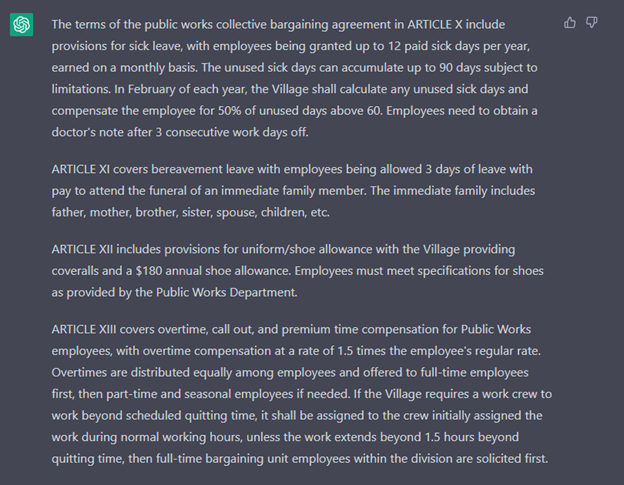

Test Case 4: Collective bargaining interpretation

Minimally useful

Context

I asked ChatGPT to review a couple of sections of a public works collective bargaining agreement, then asked questions related to the agreement. While it was able to respond with basic information, it did not handle more complex scenarios. It did provide a decent, more concise summary of those sections of the agreement.

Result